UC San Diego’s Department of Cognitive Science promotes the study of learning, perception, action, and interaction in the physical, digital, and cultural worlds. We offer a range of cognition-related majors along with a unique minor in Design.

Our interdisciplinary vision draws from anthropology, computer science, linguistics, neuroscience, philosophy, psychology, sociology, and more. Our graduates often go on to careers in technology, medicine, and research.

Follow us on Instagram and Facebook to learn more!

PROGRAMS AND RESEARCH

UNDERGRADUATE STUDIES

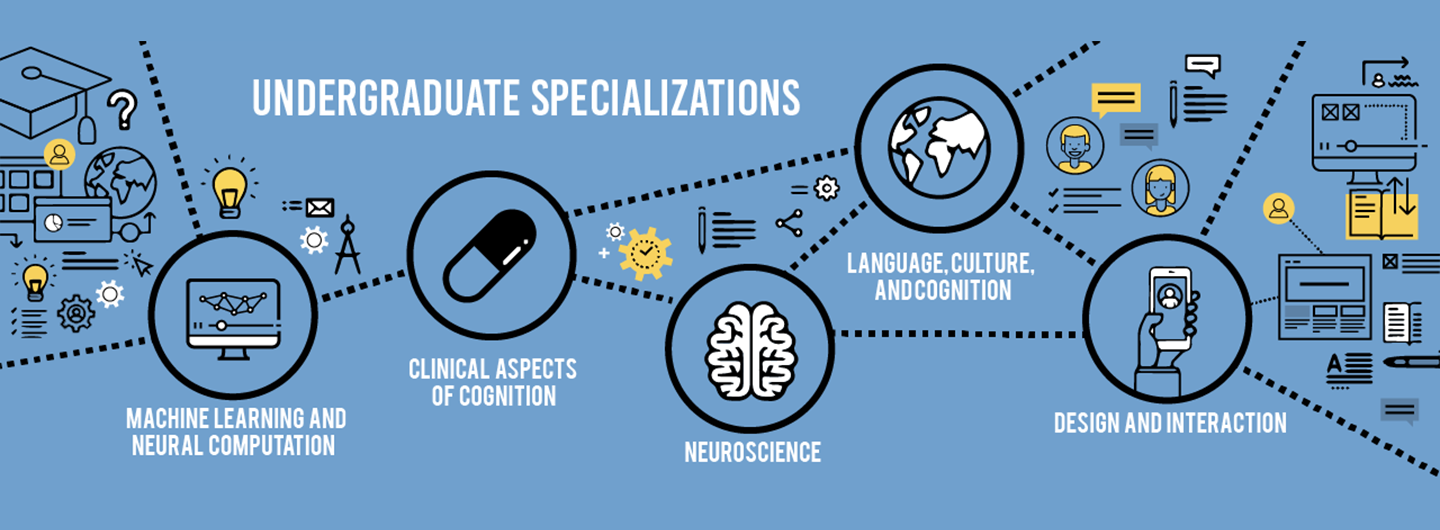

Our major prepares students for careers in AI, data science, information technology, product design, medicine, and scientific research.

GRADUATE STUDIES

Graduate studies offer a multidisciplinary approach to cognition, with emphasis on computer science, linguistics, neuroscience, psychology, and related aspects of anthropology, biology, mathematics, philosophy, and sociology.

RESEARCH

The underlying philosophy of the department challenges faculty and students to be knowledgeable in and sympathetic to a wide variety of fields and techniques.

Cognitive Science Event Calendar

Take a look at our CogSci calendar to find upcoming events, webinars, and more!

EVENTS

Are you an alum of the department?

Get involved or help us support the efforts of a new generation!